VoxCroft’s Hate Speech Toolkit: Preventing Genocide Through Early Detection of Inflammatory Rhetoric

January 10, 2024


A genocide is the deliberate killing of a large number of people from a specific nation or ethnic group with the aim of destroying that group. Over the past year, VoxCroft has observed patterns of violence in several African countries that, if left unchecked, could escalate into full-blown genocide.

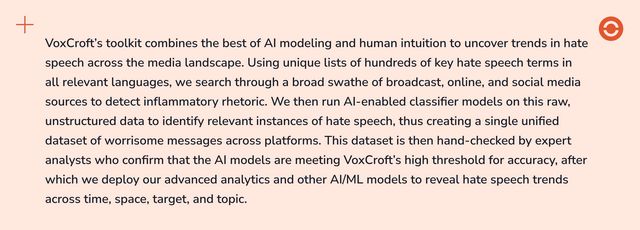

Weak moderation on social media platforms, combined with the ease with which conversations can be amplified, makes these platforms a preferred venue for bad-faith actors seeking to incite distrust and call for violence against specific targets. Early detection of media narratives that incite violence against ethnic, religious, or politically aligned groups is pivotal to de-escalating incipient genocides. VoxCroft has successfully used its population-centric analytical toolkit to:

- detect potential vectors for inflammatory rhetoric,

- assess the severity of the language employed, and

- map the extent to which these networks can trigger violent activity.

Continued Ethiopian Conflict Triggers New Inflammatory Networks

In November 2022, a ceasefire agreement officially ended the Tigray War, a two-year conflict between Ethiopian government-backed troops and the Tigray People’s Liberation Front (TPLF). However, VoxCroft observed that the vitriol directed at the TPLF by government-controlled media simply migrated from traditional online outlets to social media platforms, spurring reciprocal inflammatory rhetoric among various political and ethnic groups. Newly created bot accounts further amplified this rhetoric.

VoxCroft analyzed user comments on the Facebook page of a state-owned media house and found that 21 percent of them contained inflammatory rhetoric. Some targeted Prime Minister Abiy Ahmed and the Ethiopian government, describing him as a “Nazi” and his administration as a “genocidal” regime. The hate speech also extended to other groups in Ethiopia: the TPLF was described as a “cancer,” and some comments called for officials of Oromo ethnicity to “be punished by death.”

These examples of inflammatory rhetoric indicated that deeply entrenched distrust had not been resolved by the November 2022 peace agreement. Indeed, renewed fighting between government forces and ethnically aligned political groups persisted throughout 2023.

Social Network Analysis Reveals Hyperactive Bad Faith Actors in Sudan

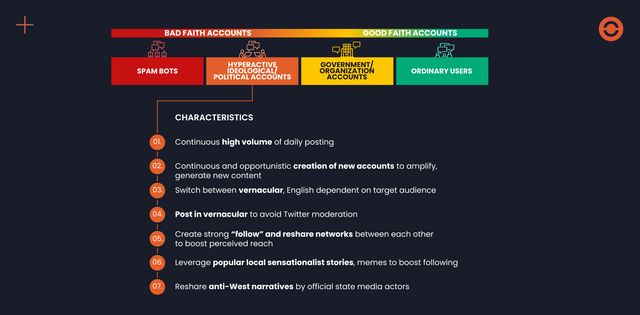

The authenticity of social media accounts is often hard to establish, which increases the potential for the spread of disinformation and misinformation. To better understand where these messages originate, VoxCroft uses a proprietary algorithm to detect inauthentic social media activity pushing inflammatory narratives.

In April 2023, following the breakdown of Sudan’s transitional government, conflict erupted between the Sudanese Armed Forces (SAF) under Abdel Fattah al-Burhan and the paramilitary Rapid Support Forces (RSF) under Mohamed Hamdan Dagalo, known as Hemedti. In the aftermath, VoxCroft noticed an increase in the number of hyperactive ideological social media accounts targeting young people on platforms such as TikTok to rally support for either the RSF or the SAF.

Hyperactive accounts, defined as automated or human-driven accounts that post several times a day on the same topic to artificially inflate the visibility of a particular narrative within the wider network, can distort public perceptions of an issue. In Sudan, an SAF-aligned network began to use inflammatory rhetoric in its posts. For example, one user called for the “SAF to take over Khartoum and purify it within 24 hours.”
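VoxCroft’s detection algorithm is proprietary and is not described here. As a rough illustration of the definition above only, the Python sketch below flags accounts that repeatedly post many times a day on a single topic. The `Post` record, the `flag_hyperactive` function, and both thresholds are hypothetical. The sketch assumes each post has already been assigned a topic upstream, and a simple frequency count like this cannot by itself tell automated accounts apart from human-driven ones.

```python
from collections import Counter
from collections.abc import Iterable
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Post:
    account_id: str
    topic: str  # assumption: assigned upstream, e.g. by a topic classifier
    posted_at: datetime


def flag_hyperactive(
    posts: Iterable[Post],
    min_posts_per_day: int = 5,  # hypothetical threshold for "several times a day"
    min_active_days: int = 3,    # hypothetical persistence requirement
) -> list[str]:
    """Return IDs of accounts that reach `min_posts_per_day` posts on one
    topic on at least `min_active_days` distinct calendar days."""
    # Count posts per (account, topic, calendar day).
    daily = Counter((p.account_id, p.topic, p.posted_at.date()) for p in posts)

    # For each (account, topic) pair, count the days that met the threshold.
    heavy_days = Counter(
        (account, topic)
        for (account, topic, _day), n in daily.items()
        if n >= min_posts_per_day
    )

    # An account is flagged if any single topic shows sustained high volume.
    return sorted(
        {account for (account, _topic), days in heavy_days.items()
         if days >= min_active_days}
    )


if __name__ == "__main__":
    start = datetime(2023, 5, 1, 8, 0)
    # Hypothetical account posting six times a day on one topic for three days.
    sample = [
        Post("a1", "khartoum", start + timedelta(days=d, hours=h))
        for d in range(3)
        for h in range(6)
    ]
    print(flag_hyperactive(sample))  # ['a1']
```

Requiring the threshold to be met on several days, rather than once, is a design choice meant to separate sustained amplification from a single burst of activity around a breaking event.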

Social Media Hate Speech Goes Mainstream in Democratic Republic of the Congo

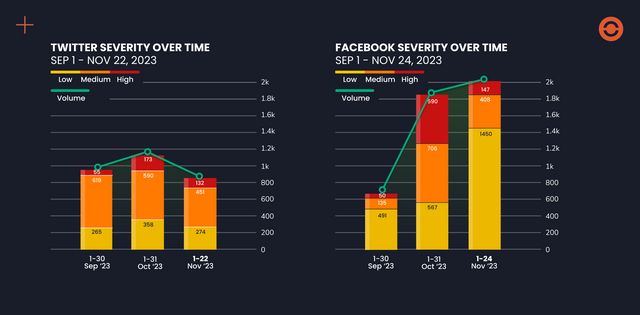

Triggering events can inflame existing tensions, and low-level inflammatory rhetoric can spread beyond social media into broadcast and online media, where it can reach new audiences and evolve into more severe hate speech.

A recent review of Congolese social and traditional media showed that armed clashes between the M23 rebel group and the Congolese Armed Forces (FARDC), along with the foreign actors that have become involved on various sides of the multi-year conflict in the eastern DRC, received prominent attention in social media conversations. These clashes also shaped how users perceived the allegiances of political candidates in the December 2023 presidential election.

VoxCroft’s automated hate speech detection algorithms for online, broadcast, and social media tracked how ethnic slurs against the M23, many of whose members are of Tutsi origin, spread from social media into presidential candidates’ campaign speeches and into radio news and talk shows. Before the election period, social media posts questioned the nationality of Tutsis holding Congolese citizenship, claiming they belonged in Rwanda. Users also posted videos that they presented as proof that Rwandan troops were fighting alongside the M23, and claimed that Rwandan President Paul Kagame is “the enemy of the DRC.”

During a campaign speech, Congolese President Félix Tshisekedi, who was seeking reelection, likened Kagame to Hitler, a remark that was widely reported by radio stations and newspapers. VoxCroft also observed an increase in the severity of hate speech directed at Tutsis and Rwandans, with terms such as “vipers” and “vampires” coming into use.

Left unchecked, hate speech can intensify, with serious consequences for the communities it targets. In the DRC, VoxCroft noted that this escalation took the form of lynchings; in Ethiopia, social media users called for the killing of named individuals.

VoxCroft works closely with humanitarian organizations, aid agencies, and international mediation teams to identify the sources of inflammatory rhetoric and assess its severity and potential for escalation. The earlier these threats are identified, the greater the chance that good-faith interventions can stop or reverse a slide toward genocide.